How NetEase integrated Inworld in Cygnus Enterprises, Part 2

Team Miaozi (NetEase) walks through how they integrated Inworld into Cygnus Enterprises in this two-part series. Part 2 focuses on narrative design.

This is part two of our two-part series on Team Miaozi’s (NetEase) implementation of Inworld in their Action RPG Cygnus Enterprises. In the first part, we wrote about the gameplay and technical challenges they faced and the choices they made. In this part, we’ll dive into how Inworld AI shifted how the Cygnus Enterprises team tackled narrative design.

Cygnus Enterprises is a top-down shooter that combines the gameplay of an Action RPG with base management elements. It is set in a future where humanity has spread out to nearby solar systems and is trying to build up settlements. The player takes on the role of a contractor and is supported by PEA (short for Personal Electronic Assistant), an AI companion powered by Inworld.

In this article, we’ll tackle how AI NPCs affect how studios have to think about narrative design and the choices Team Miaozi made. You can also check out our Case Study to learn more about why Team Miaozi chose the Inworld Character Engine to power their NPCs.

A new type of narrative design

AI NPCs are set to change how game narratives are created and experienced. While some worry that AI NPCs will spell the end of game writers and narrative designers, it might be surprising to learn that Team Miaozi’s writer was the one who lobbied hardest to add Inworld to Cygnus Enterprises.

“I’m not worried about AI NPCs. The AI doesn't magically know about the missions or the game setting,” said Eva Jobse. “A writer still has to set all of that up behind the scenes.”

What Eva found, however, was that the writing process shifted with Inworld. “Setting up smart AI NPCs with Inworld changed the writing process significantly,” she explained. “Rather than writing each distinct dialogue, it felt like I'd been put in the director's chair, outlining what I was looking for in broad strokes through adjusting character traits and motivations. I had to convey the key points of the world building and story to the AI and then let the AI take off from there.”

After that, there was also work needed to refine the characters. “There was a lot of testing and tweaking involved to ensure the output matched my vision and that, in the end, the AI characters displayed strong and unique personalities with different interests and conversation styles,” Eva explained.

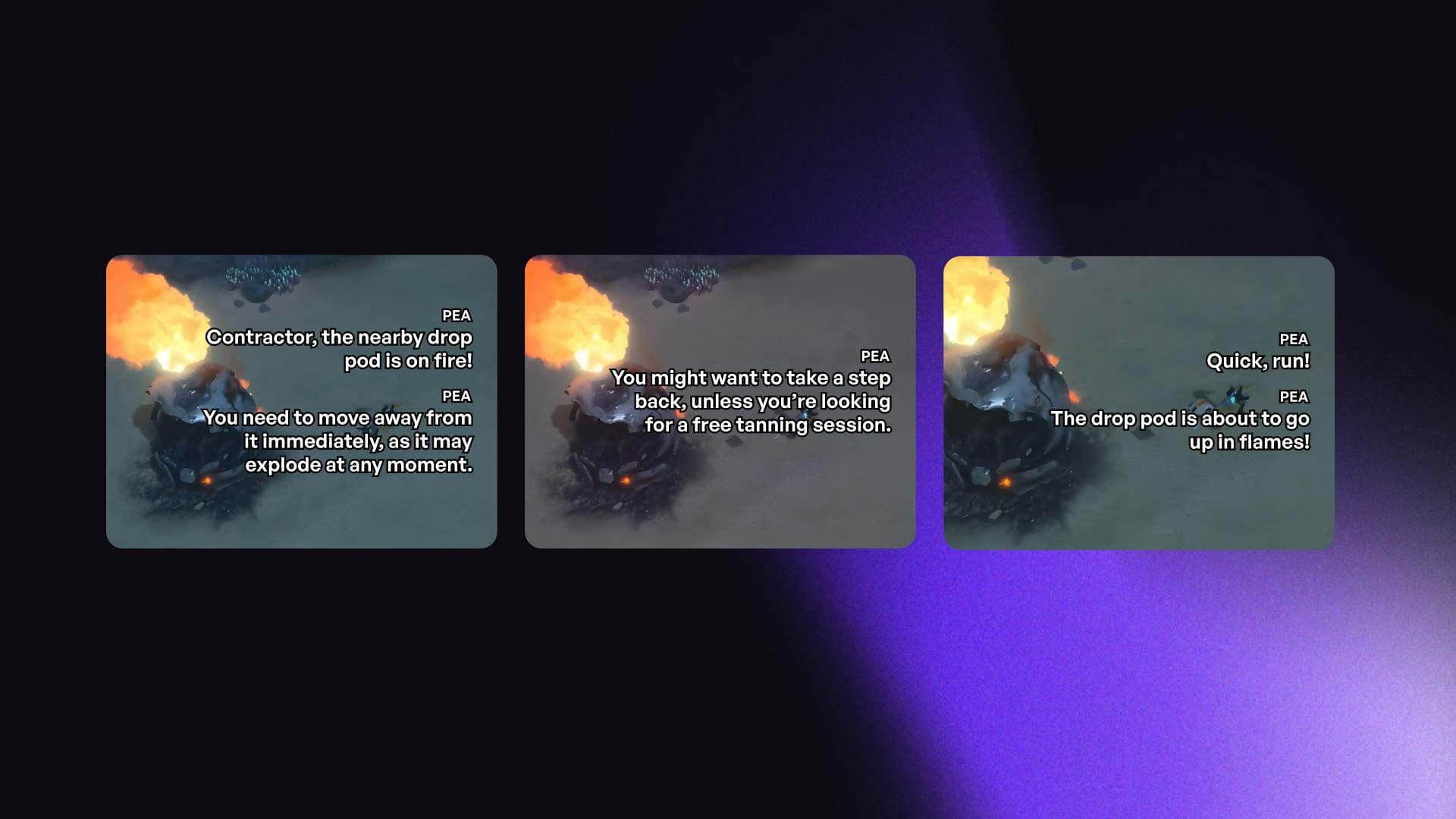

The outcome of directing rather than writing scenes is that NPCs use the personality they’re given to improvise in ways that will surprise even the developers. “When you just start the game, PEA warns you that the drop pod is about to explode,” explained Brian, “but you don’t know what she’ll say. She could just tell you to run away or, since she’s very sassy and sarcastic, she might tell you that you should walk away now if you don't want a free tanning session. This makes it fun to replay the game, as the experience will always be different.”

Studios can implement AI NPCs the way Team Miaozi did, with two dialogue systems. Even when using Inworld alone, though, narrative designers can write and program NPCs to say pre-written dialogue verbatim in key scenes.
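One way to picture mixing scripted and improvised dialogue is a small router that returns a writer's line verbatim when a key story beat has one and otherwise defers to the AI character. The sketch below is a hypothetical Python illustration, not Team Miaozi's Unity code or an Inworld API: `DialogueRouter`, the beat IDs, the sample line, and the `generate` callback are all assumed names.

```python
from typing import Callable, Dict


class DialogueRouter:
    """Hypothetical router: scripted lines for key beats, AI for everything else."""

    def __init__(self, scripted: Dict[str, str], generate: Callable[[str], str]) -> None:
        self._scripted = scripted   # beat id -> line that must be said verbatim
        self._generate = generate   # stand-in for a call to the AI character

    def line_for(self, beat_id: str, player_input: str) -> str:
        # Key story beats always use the writer's exact wording.
        if beat_id in self._scripted:
            return self._scripted[beat_id]
        # Anywhere else, let the character improvise.
        return self._generate(player_input)


def fake_generate(player_input: str) -> str:
    # Placeholder for the generative call; a real game would query its character service here.
    return f"(improvised reply to: {player_input})"


# The scripted line is made up for this example.
router = DialogueRouter(
    {"mission1_briefing": "Your contract: restore power to the relay station."},
    fake_generate,
)
print(router.line_for("mission1_briefing", "What now?"))
print(router.line_for("free_roam", "Nice weather, huh?"))
```

Keeping the scripted table separate from the generative call means a writer can lock down critical beats without touching how the character improvises elsewhere.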

Using Scenes to manage NPCs' knowledge

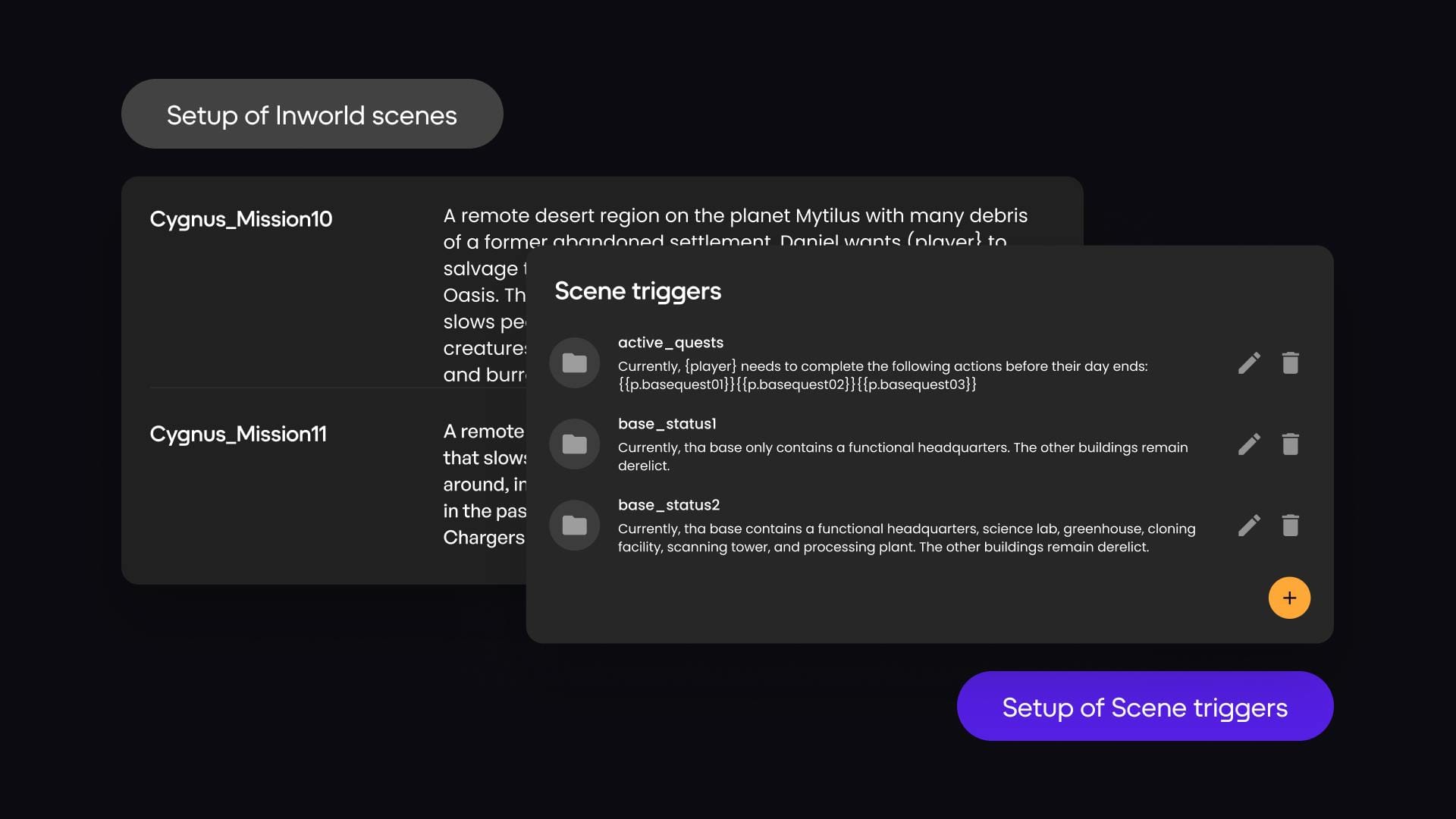

Inworld's Scenes feature is a powerful tool that allows developers to ensure that their NPCs don’t break the fourth wall by providing NPCs with critical context around what’s happening in the scene. Cygnus Enterprises, however, used the Scenes feature in an innovative way: to ensure PEA acquired knowledge over time in the game. That way, she wouldn’t get confused about what scene she was in, what she knew at that stage of the game, and what was happening in the game world at that time.

“We started by creating a map of the game world,” explained Brian Cox, Lead Programmer on Team Miaozi. “This map included all the different locations in the game, as well as the relationships between those locations. We then linked each Unity scene to a scene in Inworld. This allowed us to control what information was available to each version of PEA based on her location in the game world.”

Because each version of PEA was designed this way, her knowledge of the game world differed depending on which story mission the player was currently on.

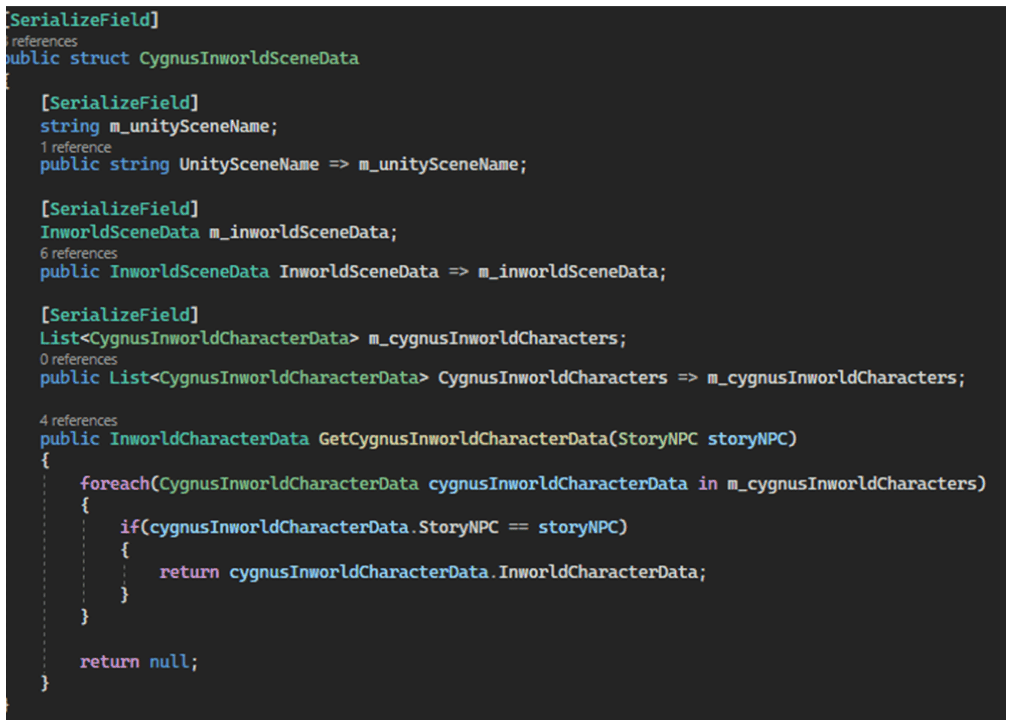

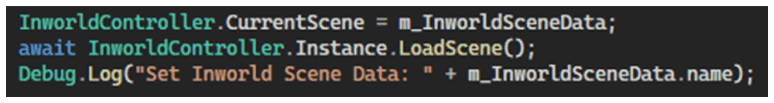

While there was significant data management work in setting up the different versions of PEA, it was simple from a technical perspective to set up in Unity. “Whenever we had scene loads,” explained Brian, “we would load the Inworld scene along with all the characters in it and then the character would have a slightly different brain with information about what just happened or what will happen in the scene in question. We simply took out the old character brain and put in the new one.”
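The swap Brian describes can be pictured as a lookup keyed by the scene that was just loaded. This is a hypothetical Python illustration rather than the team's Unity code: `CharacterBrain`, `CompanionSlot`, `on_scene_loaded`, the scene names `Mission1` and `Mission2`, and the `PEA_M2` / `Cygnus_mission2` entries are assumptions. Only `PEA_M1` and `Cygnus_mission1` are names that appear in this article.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class CharacterBrain:
    character_id: str    # which version of the character to load
    inworld_scene: str   # the Inworld Scene that version lives in


# Hypothetical table: game scene name -> the version of PEA that belongs in it.
BRAINS_BY_SCENE: Dict[str, CharacterBrain] = {
    "Mission1": CharacterBrain("PEA_M1", "Cygnus_mission1"),
    "Mission2": CharacterBrain("PEA_M2", "Cygnus_mission2"),  # assumed naming
}


class CompanionSlot:
    """Holds whichever version of the companion is currently active."""

    def __init__(self) -> None:
        self.active: Optional[CharacterBrain] = None

    def on_scene_loaded(self, scene_name: str) -> None:
        # Take out the old brain and put in the one for the new scene.
        # Unknown scenes leave the slot empty rather than keeping stale knowledge.
        self.active = BRAINS_BY_SCENE.get(scene_name)


slot = CompanionSlot()
slot.on_scene_loaded("Mission1")
first = slot.active
slot.on_scene_loaded("Mission2")
second = slot.active
```

Because each version is a separate entry, what PEA knows at any point in the story is decided by data rather than by logic scattered through the game code.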

While this was a great workaround, Inworld is currently developing a technical solution to this. “Our next version of the character brain will be able to acquire knowledge over time on its own,” said Nathan Yu, Director of Product at Inworld. “This will eliminate the need to create multiple versions of the same character as NetEase did, as the character brain will be able to adapt to different situations and learn from its experiences.”

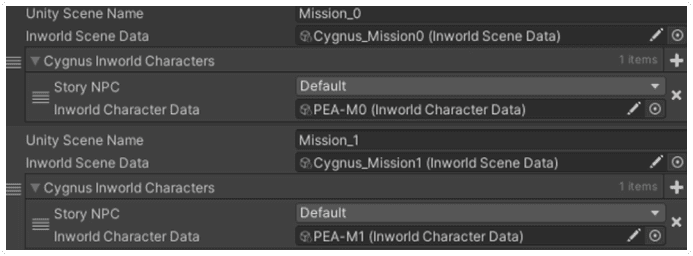

Here’s how they set it up:

The first image shows the hierarchical structure Team Miaozi created where one Unity Scene contains multiple Inworld NPCs, along with the Inworld Scene they are situated in.

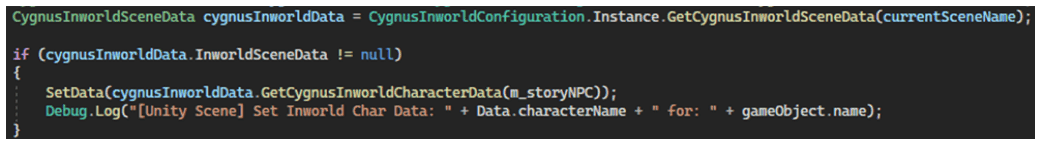

They use this data structure to load a character called PEA_M1 when a player plays a specific level, like Mission 1, as shown in the diagram. In their studio panel, they also set up an Inworld Scene called "Cygnus_mission1," which includes the character PEA_M1.

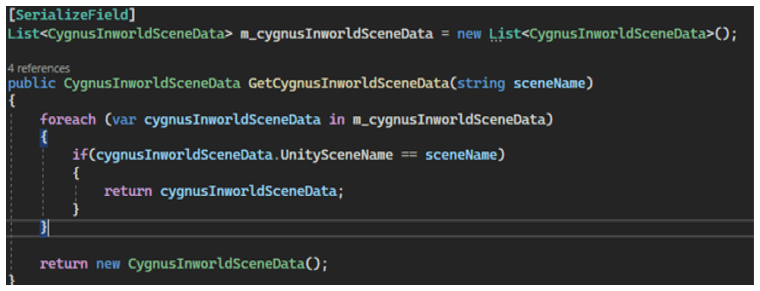

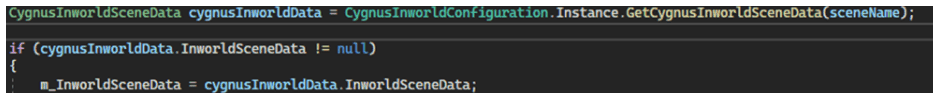

Team Miaozi defined a data structure for this called CygnusInworldSceneData.

It contains a Unity Scene, an Inworld Scene, a List for storing Inworld Character data, and a function for retrieving that character data.
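A structure with that shape might look like the following. It is a Python sketch for illustration only, not the team's Unity listing: the name `CygnusInworldSceneData` comes from the article, while the field names, `InworldCharacterData`, `get_character`, and the Unity scene name `Mission1` are assumptions.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class InworldCharacterData:
    character_id: str    # e.g. "PEA_M1"
    display_name: str    # name shown to the player (assumed field)


@dataclass
class CygnusInworldSceneData:
    unity_scene: str     # Unity scene this entry belongs to
    inworld_scene: str   # matching Inworld Scene, e.g. "Cygnus_mission1"
    characters: List[InworldCharacterData] = field(default_factory=list)

    def get_character(self, character_id: str) -> Optional[InworldCharacterData]:
        """Return the character with this id, or None if the scene has no such character."""
        for character in self.characters:
            if character.character_id == character_id:
                return character
        return None


# Calling the lookup for Mission 1.
mission1 = CygnusInworldSceneData(
    unity_scene="Mission1",  # assumed Unity scene name
    inworld_scene="Cygnus_mission1",
    characters=[InworldCharacterData("PEA_M1", "PEA")],
)
pea = mission1.get_character("PEA_M1")
```

Returning `None` for a missing character, instead of raising, lets the caller decide how a scene without a companion should behave.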

Safety

Safety is often a concern for studios considering AI NPCs, since large language models can be unpredictable. If AI NPCs are not properly moderated, they could say or do things that are offensive or harmful, which could damage the IP. This is especially important in games that are played by children or young adults.

Inworld uses a variety of configurable techniques to ensure that NPCs are safe with multiple redundancies so that game developers don’t have to worry about what their NPCs will say.

“We offer developers the ability to configure their own safety settings based on what works best for their game,” said Nathan. “If they want to filter out swear words or mentions of alcohol and drugs, they can do so. But if they’re creating an experience aimed at adults, they can drop the safety guardrails for that.”

Team Miaozi opted to ensure that characters could handle inappropriate topics without derailing the conversation. “I configured the characters to be able to handle inappropriate topics in a soft manner,” explained Eva. “Instead of the NPCs refusing to answer the question, they are configured to try to steer it back into a game-appropriate [direction]. That way it comes across as a personal preference of the character remaining in their designated role, rather than a hard guardrail that kills the conversation and interferes with the game experience.”
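The difference between a hard guardrail and a soft redirect can be sketched as a per-game setting. The Python below is a hypothetical illustration and does not reflect Inworld's actual safety configuration: `SafetyConfig`, `respond`, the topic labels, and both fallback lines are assumptions, and it presumes the player's message has already been tagged with a topic by some other component.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class SafetyConfig:
    blocked_topics: FrozenSet[str]  # topics this game wants to avoid
    soft_redirect: bool             # True: steer back in character; False: refuse outright


def respond(config: SafetyConfig, topic: str, in_character_reply: str) -> str:
    """Pick a reply for a player message that has already been tagged with a topic."""
    if topic not in config.blocked_topics:
        return in_character_reply
    if config.soft_redirect:
        # Stays in role and nudges the player back toward the game.
        return "Not really my thing. Shall we get back to the mission?"
    # Hard guardrail: a flat refusal that ends the thread.
    return "I can't talk about that."


# A family-friendly game blocks several topics; an adult-oriented one blocks none.
family_game = SafetyConfig(frozenset({"alcohol", "drugs", "profanity"}), soft_redirect=True)
adult_game = SafetyConfig(frozenset(), soft_redirect=True)
```

With the same player message, `family_game` yields the in-character redirect while `adult_game` passes the character's own reply through unchanged.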

Conclusion

Despite having to approach narrative design for Cygnus Enterprises differently, Team Miaozi feels like it was more than worth it. “The AI NPCs in our game are much more realistic and engaging,” Brian said.

For more information about why they implemented AI NPCs and the impact they had on player engagement, see our Case Study on Cygnus Enterprises. Ready to integrate Inworld yourself? Get started in our Studio.