Inworld Agent Runtime

Build realtime voice and chat agents for demanding applications. Optimize for user outcomes with integrated metrics and experiments. Deploy to hosted API endpoints or integrate via SDKs.

Realtime agents built for scale

Build with production-grade orchestration and rapid inference.

Why Inworld Agent Runtime

Your users deserve the best quality, availability and speed

Exceptional Quality

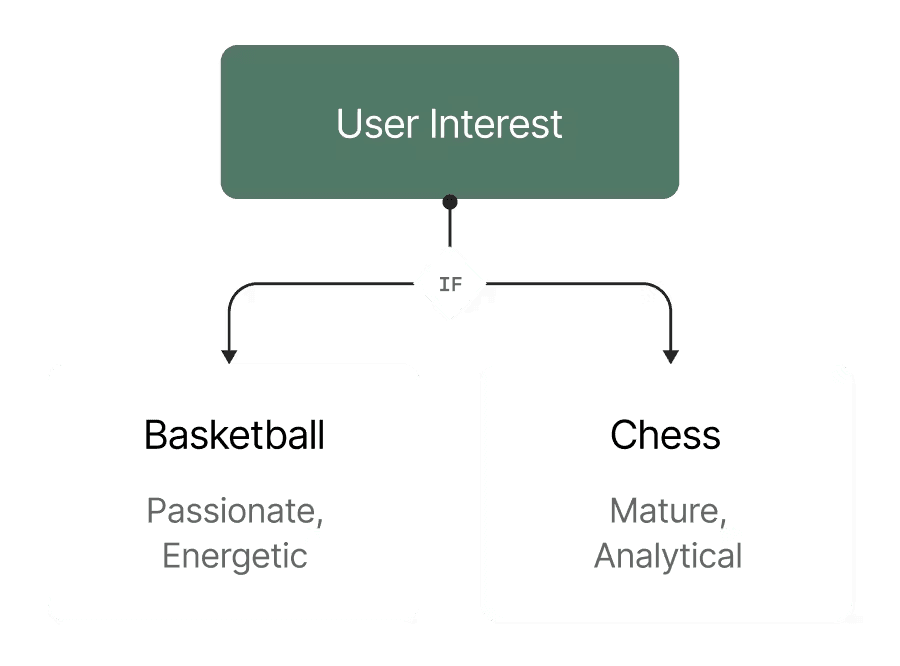

Serve personalized models and prompts to delight every user.

High Availability

Automatic failovers prevent downtime from outages and rate limits.

Ultra Low Latency

Lightning-fast execution that scales seamlessly from 10 to 10M users with minimal code changes.

Proven Results

Built for every consumer AI application, from social apps and games to learning and wellness.

Bible Chat

Increased voice AI feature engagement and reached millions

Get started

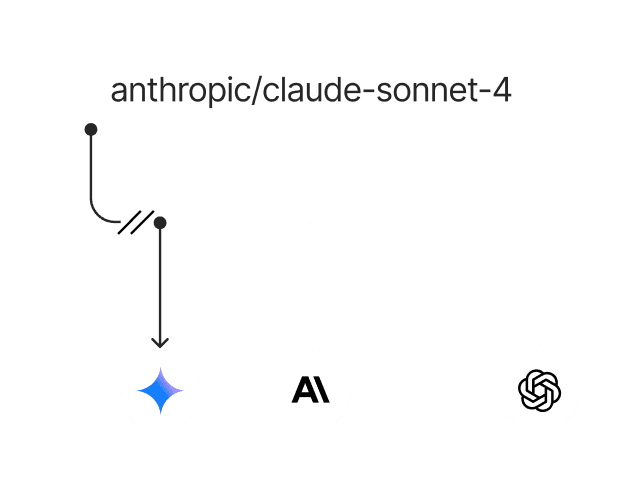

Inworld easily integrates with any existing stack or provider (Anthropic, Google, Mistral, OpenAI, etc.) via one API key.

Available to everyone now.

FAQ

The Inworld Agent Runtime uniquely combines

lightning-fast C++ core for realtime multimodal conversational interactions.

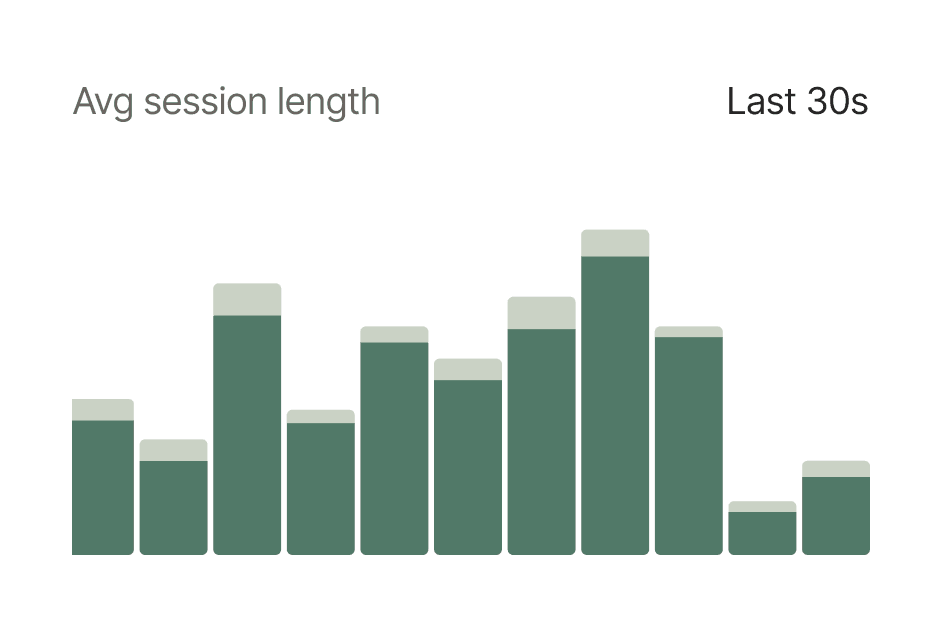

built-in telemetry for deep user insights (traces & logs).

live A/B testing to accelerate improvements to the end user experience.

Agent Runtime is free: it incurs no usage cost or license fee, and the only thing you pay for is model consumption. See Inworld's model pricing for details.

Follow our quick start guide to deploy a realtime conversational AI endpoint in 3 minutes, then integrate it into your app.

Inworld Agent Runtime is specifically designed for developers building realtime conversational AI and voice agents that scale to millions of concurrent users.

Use cases include language tutors, social media, AI companions, game characters, fitness coaches, shopping agents, and more.

You can use the Inworld CLI to deploy a hosted endpoint that any part of your existing stack can call.

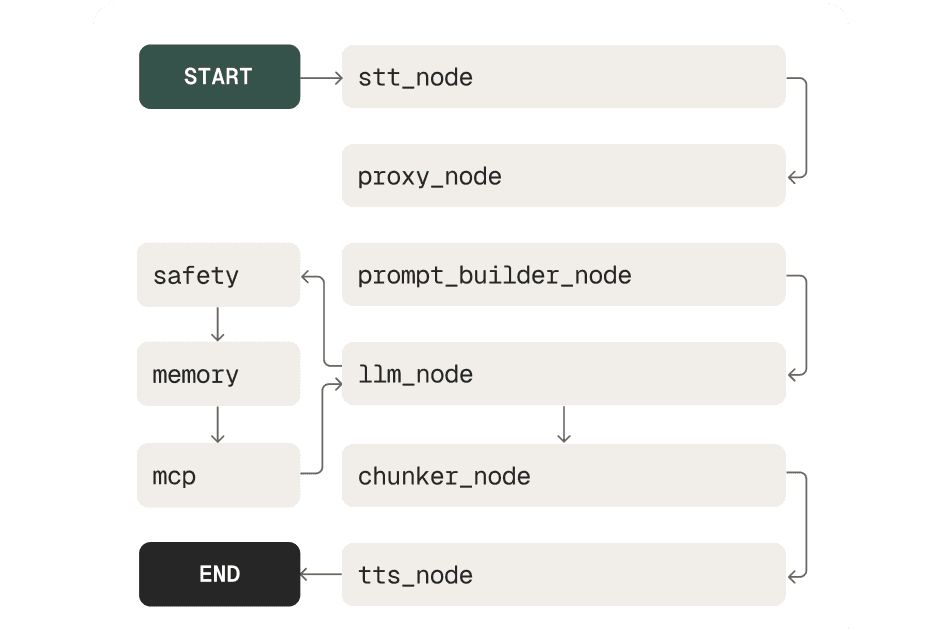

Developers get a full suite of pre-optimized nodes for building any realtime AI pipeline that scales to millions of users, including nodes for:

- model I/O (STT, LLM, TTS)

- data engineering (prompt building, chunking)

- flow logic (keyword matching, safety)

- external tool calls (MCP integrations)

- and more
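The node categories above can be illustrated with a minimal, framework-agnostic sketch in Python. This is not Inworld's SDK or node API: the node functions, the shared-state dictionary, the blocklist, and the stubbed STT, LLM, and TTS behavior are all hypothetical stand-ins, chosen only to show how model I/O, data-engineering, and flow-logic nodes can chain into a single pipeline.

```python
import re
from typing import Any, Callable, Dict, List

# Hypothetical node signature (not Inworld's API): each node takes the shared
# pipeline state, updates it, and returns it for the next node.
State = Dict[str, Any]
Node = Callable[[State], State]

BLOCKED_WORDS = {"forbidden"}  # illustrative blocklist for the safety node


def stt_node(state: State) -> State:
    """Model I/O (stub STT): treat the incoming audio bytes as UTF-8 text."""
    state["text"] = state["audio"].decode("utf-8")
    return state


def safety_node(state: State) -> State:
    """Flow logic: keyword matching of the transcript against a blocklist."""
    words = state["text"].lower().split()
    state["safe"] = not any(w in BLOCKED_WORDS for w in words)
    return state


def prompt_node(state: State) -> State:
    """Data engineering: build the prompt that an LLM node would receive."""
    state["prompt"] = f"User said: {state['text']}\nReply briefly."
    return state


def llm_node(state: State) -> State:
    """Model I/O (stub LLM): a real node would send state['prompt'] to a provider."""
    if state["safe"]:
        state["reply"] = f"You said '{state['text']}'. Thanks for chatting!"
    else:
        state["reply"] = "Sorry, I can't help with that."
    return state


def chunk_node(state: State) -> State:
    """Data engineering: split the reply into sentences so TTS can start early."""
    state["chunks"] = re.split(r"(?<=[.!?])\s+", state["reply"].strip())
    return state


def tts_node(state: State) -> State:
    """Model I/O (stub TTS): encode each text chunk as bytes in place of audio."""
    state["audio_chunks"] = [c.encode("utf-8") for c in state["chunks"]]
    return state


PIPELINE: List[Node] = [stt_node, safety_node, prompt_node, llm_node, chunk_node, tts_node]


def run_pipeline(nodes: List[Node], state: State) -> State:
    """Run each node in order, threading the shared state through them."""
    for node in nodes:
        state = node(state)
    return state


if __name__ == "__main__":
    result = run_pipeline(PIPELINE, {"audio": b"hello there"})
    print(result["chunks"])
```

Keeping each node as a plain function over shared state makes nodes easy to reorder or swap, for example replacing the stub `llm_node` with a call to a real model provider. Inworld's actual node interfaces, scheduling, and streaming behavior may differ from this sketch.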