Why Inworld + NLX for Voice+ AI

- Conversations that differentiate brands: Build, deploy, and analyze voice applications that solve for any use case in any industry with NLX, including contact center automations, AI assistants, and integrations with the most common digital channels (e.g., messaging apps, voice assistants).

- Low latency: Inworld voices support ~200ms latency to the first audio chunk, enabling engaging use cases for consumer applications in entertainment, hospitality, travel, retail, and more.

- Multimodal made easy: With NLX's patented Voice+ technology, voice and digital channels operate in real-time synchrony, creating a natural, seamless conversational experience that feels like talking to a human.

- Multilingual: Build agents in 11 of the most common languages for consumer applications, including English (with its various accents), Chinese, Korean, Dutch, French, Spanish, and more.

- Scale affordably: SOTA-quality voices for just $15/1M characters (TTS 1.5-Mini), 75% cheaper than other providers, so you can build interactive experiences that scale and evolve with users' preferences and behaviors.

- Zero-shot voice cloning: Leverage Inworld's voice cloning capabilities to bring characters, brands, and assistants to life with emotion and personality using just 5-15 seconds of audio.
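The pricing bullet above comes down to simple arithmetic. The sketch below assumes billing is linear in characters at the quoted $15 per 1M characters for TTS 1.5-Mini. It also reads "75% cheaper" as meaning the comparison price is four times higher. The helper names are hypothetical, and real invoices may include tiers or minimums that this page does not describe.

```python
# Hypothetical cost estimator. ASSUMPTION: billing is linear in characters at
# the $15 per 1M characters quoted for TTS 1.5-Mini; real pricing may differ.
PRICE_PER_MILLION_CHARS_USD = 15.0


def estimate_tts_cost(characters: int,
                      price_per_million: float = PRICE_PER_MILLION_CHARS_USD) -> float:
    """Estimated USD cost to synthesize `characters` characters."""
    if characters < 0:
        raise ValueError("characters must be non-negative")
    return characters / 1_000_000 * price_per_million


def implied_comparison_price(our_price: float, savings_fraction: float) -> float:
    """If our price is `savings_fraction` cheaper, the other price is ours / (1 - savings)."""
    return our_price / (1.0 - savings_fraction)


if __name__ == "__main__":
    monthly_chars = 40_000_000  # hypothetical monthly volume
    print(f"Estimated monthly cost: ${estimate_tts_cost(monthly_chars):,.2f}")  # $600.00
    print(f"Implied comparison rate: ${implied_comparison_price(15.0, 0.75):.2f} per 1M chars")  # $60.00
```

Under these assumptions, 40M characters a month costs about $600, compared with about $2,400 at the implied $60 per 1M rate.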

How it works

- Select Inworld as your preferred TTS provider under Integrations in the NLX platform, then configure your voice preferences.

- Use Inworld’s API, or embed Inworld voices via NLX’s voice gateway, to enrich any step of the customer journey in your application. Learn how in the NLX Integration Documentation.


Inworld x NLX collaboration

Built to accelerate our internal development, now enabling all consumer builders

“We built Runtime because existing tools couldn't deliver at the speed and scale our partners required. When we realized every consumer AI company faces these same barriers, we knew we had to open up what we'd built. Thousands of builders are hitting the same scaling wall we did, so we hustled over the past year to create the universal backend to accelerate the entire consumer AI ecosystem.”

Evgenii Shingarev, VP of Engineering

We learned the three factors that determine leaders in consumer AI

-

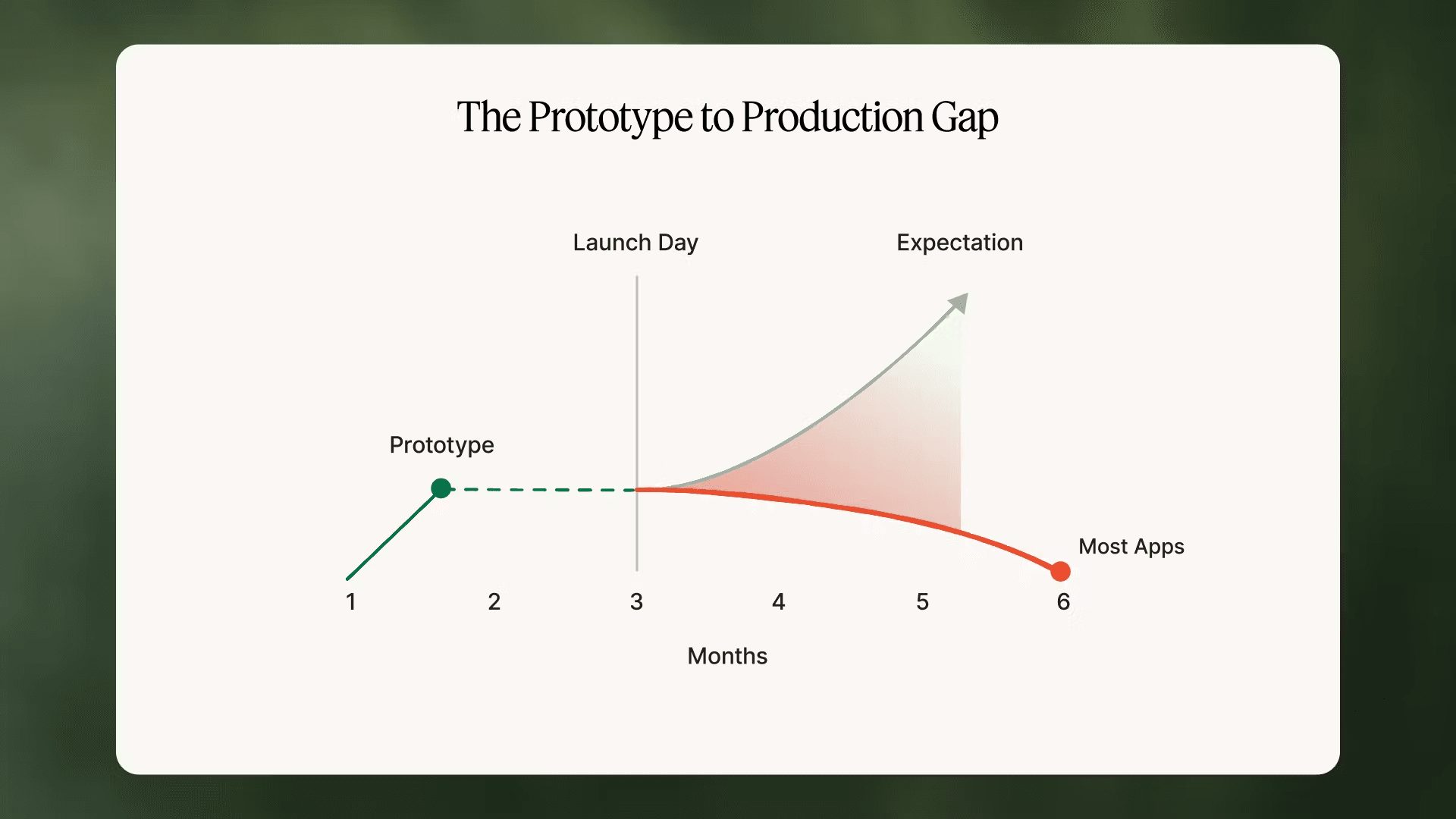

Time from prototype to production: While creating an AI demo takes hours, reaching production-readiness typically requires 6+ months of infrastructure and quality improvement work. Teams must handle provider outages, implement fallbacks, manage rate limits, provision and accelerate compute capacity, optimize costs, and ensure consistent quality. In building with category leaders, we saw how most consumer AI projects either make the leap or they stall out and die in the gap between prototype and scalable reality.

-

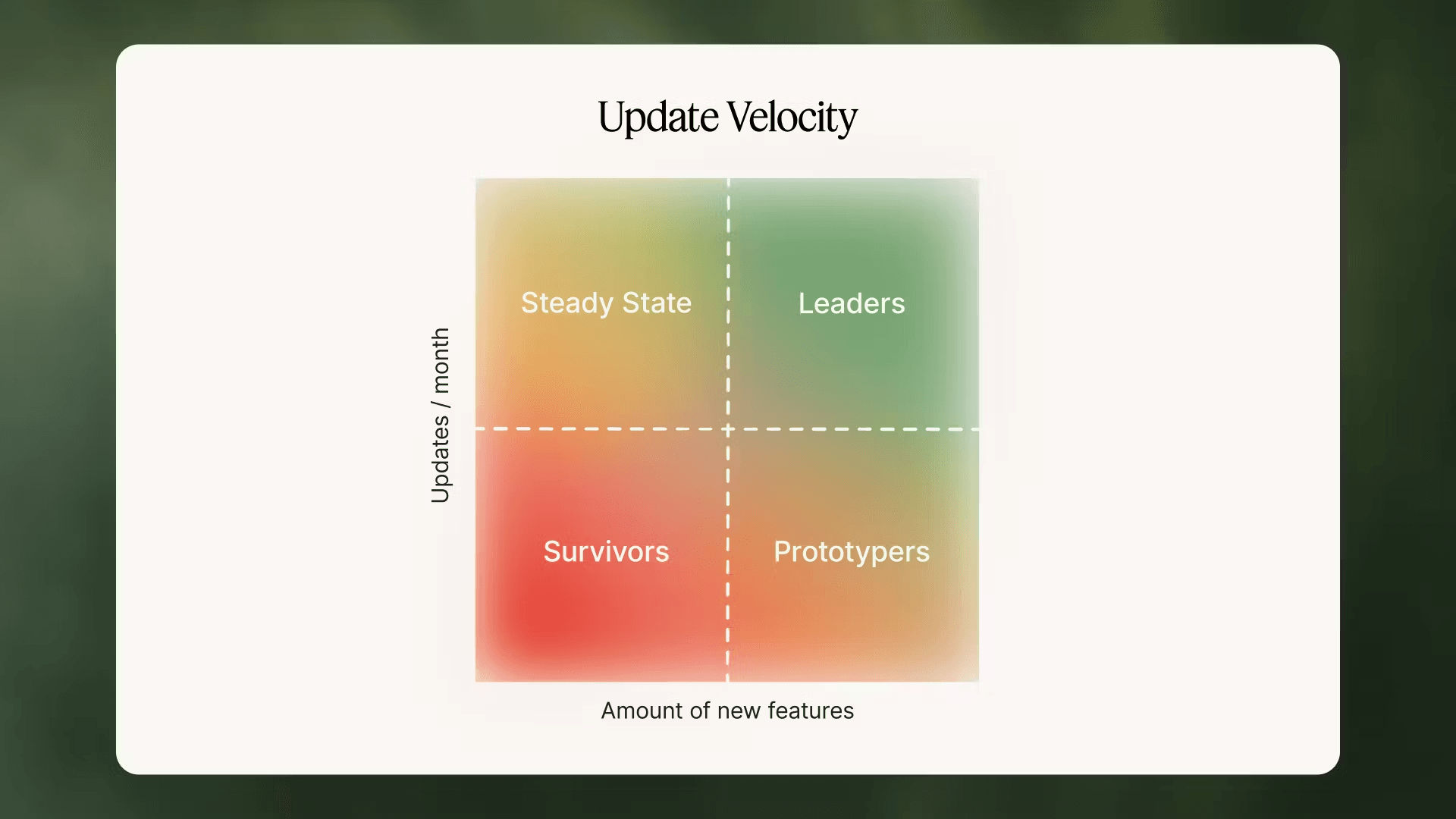

Resource allocation to new product development: Most engineering teams spend over 60% of their time on maintenance tasks: debugging provider changes, managing model updates, handling scale issues, and optimizing costs. This leaves minimal resources for building new features, causing products to stagnate while competitors advance. We experienced this firsthand, as even innovative teams get trapped in maintenance cycles instead of building what users want next.

- Experimentation velocity: Consumer preferences continuously evolve, but traditional deployment cycles of 2–4 weeks cannot match this pace. Teams need to test dozens of variations, measure real user impact, and scale winners — all without the friction of code deployments and app store approvals. Working with partners across the industry showed us that the fastest learner wins, but existing infrastructure makes rapid iteration nearly impossible.

“We scaled from prototype to 1 million users in 19 days with over 20× cost reduction”

Fai, Status CEO

Inworld Runtime’s technical design

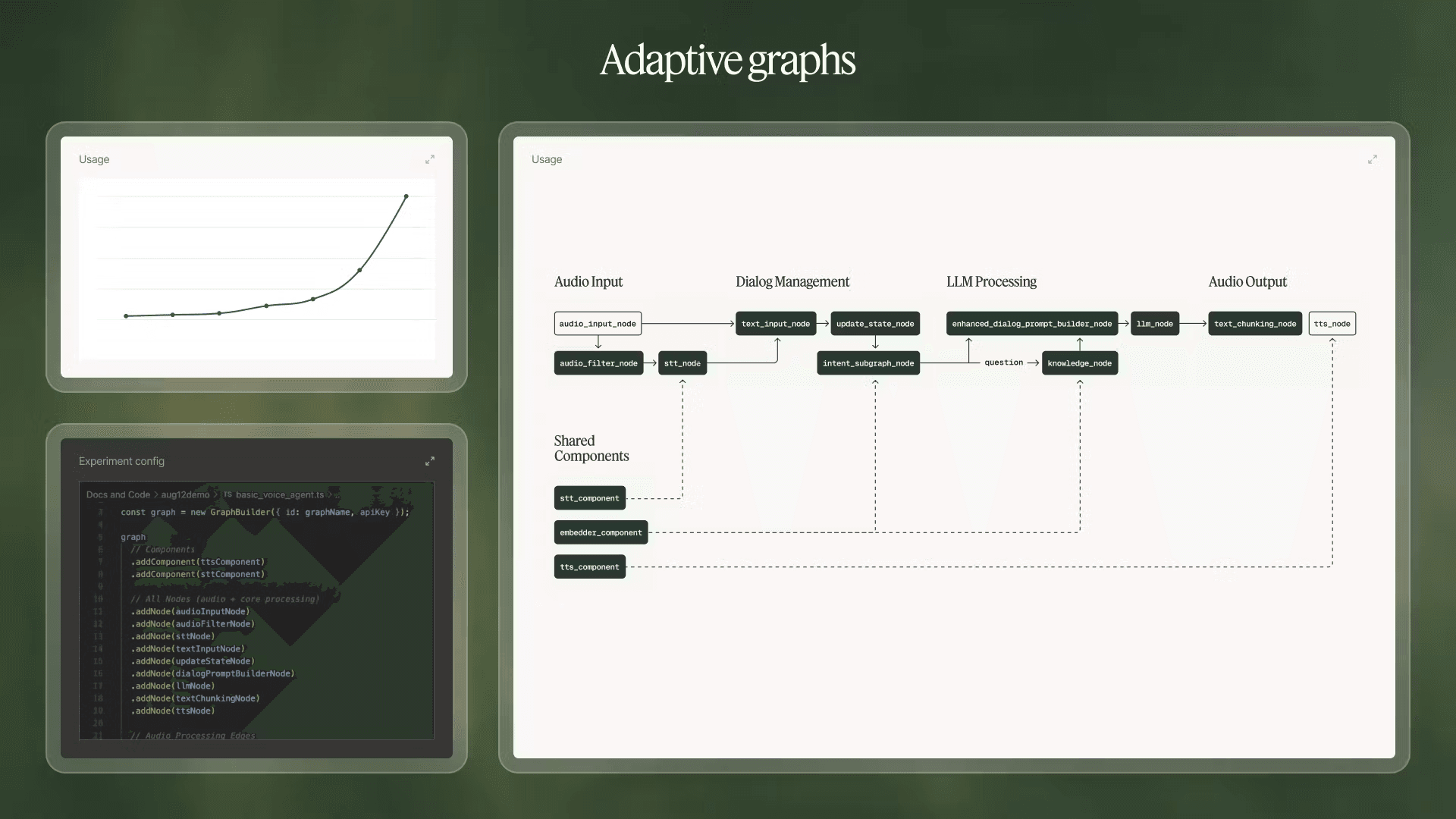

- Adaptive Graphs: A C++-based graph execution system, with SDKs for Node.js, Python, and other languages, that addresses the scaling and cross-platform limitations of most AI frameworks. Developers compose applications from pre-optimized nodes (with APIs from top providers for LLM, TTS, STT, knowledge, memory, and much more) that handle low-level integration work and automatically optimize data streams between components. The same graph scales from 10 test users to 10 million concurrent users with minimal code changes and managed endpoints. With vibe-coding-friendly interfaces, this enables the leap from prototype to production in days, not months.
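To illustrate the general idea of composing an application from nodes, here is a toy dataflow graph in plain Python. It is not the Runtime SDK, which this page does not document. The `Graph` class, node names, and the `fake_llm` and `fake_tts` stand-ins are all hypothetical. Each node is a function of its upstream outputs, and the graph runs nodes in dependency order.

```python
from graphlib import TopologicalSorter  # Python 3.9+
from typing import Callable, Dict, List, Optional


class Graph:
    """Toy dataflow graph (hypothetical, not Inworld's API)."""

    def __init__(self) -> None:
        self.nodes: Dict[str, Callable[..., object]] = {}
        self.deps: Dict[str, List[str]] = {}

    def add_node(self, name: str, fn: Callable[..., object],
                 inputs: Optional[List[str]] = None) -> "Graph":
        # Register a node and the names of the nodes or sources it consumes.
        self.nodes[name] = fn
        self.deps[name] = list(inputs or [])
        return self

    def run(self, **sources: object) -> Dict[str, object]:
        # Execute nodes in dependency order, feeding each one its inputs.
        results: Dict[str, object] = dict(sources)
        for name in TopologicalSorter(self.deps).static_order():
            if name in results:  # externally supplied source value
                continue
            if name not in self.nodes:
                raise KeyError(f"missing source input: {name}")
            args = [results[d] for d in self.deps[name]]
            results[name] = self.nodes[name](*args)
        return results


# Hypothetical stand-ins for an LLM step and a TTS step.
def fake_llm(text: str) -> str:
    return f"You said: {text}"


def fake_tts(reply: str) -> bytes:
    return reply.encode("utf-8")  # placeholder for synthesized audio


pipeline = (
    Graph()
    .add_node("reply", fake_llm, inputs=["user_text"])
    .add_node("speech", fake_tts, inputs=["reply"])
)
```

Calling `pipeline.run(user_text="hello")` returns every intermediate result, so `out["speech"]` holds the placeholder audio bytes. Swapping a provider means replacing one node function while the wiring stays the same.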

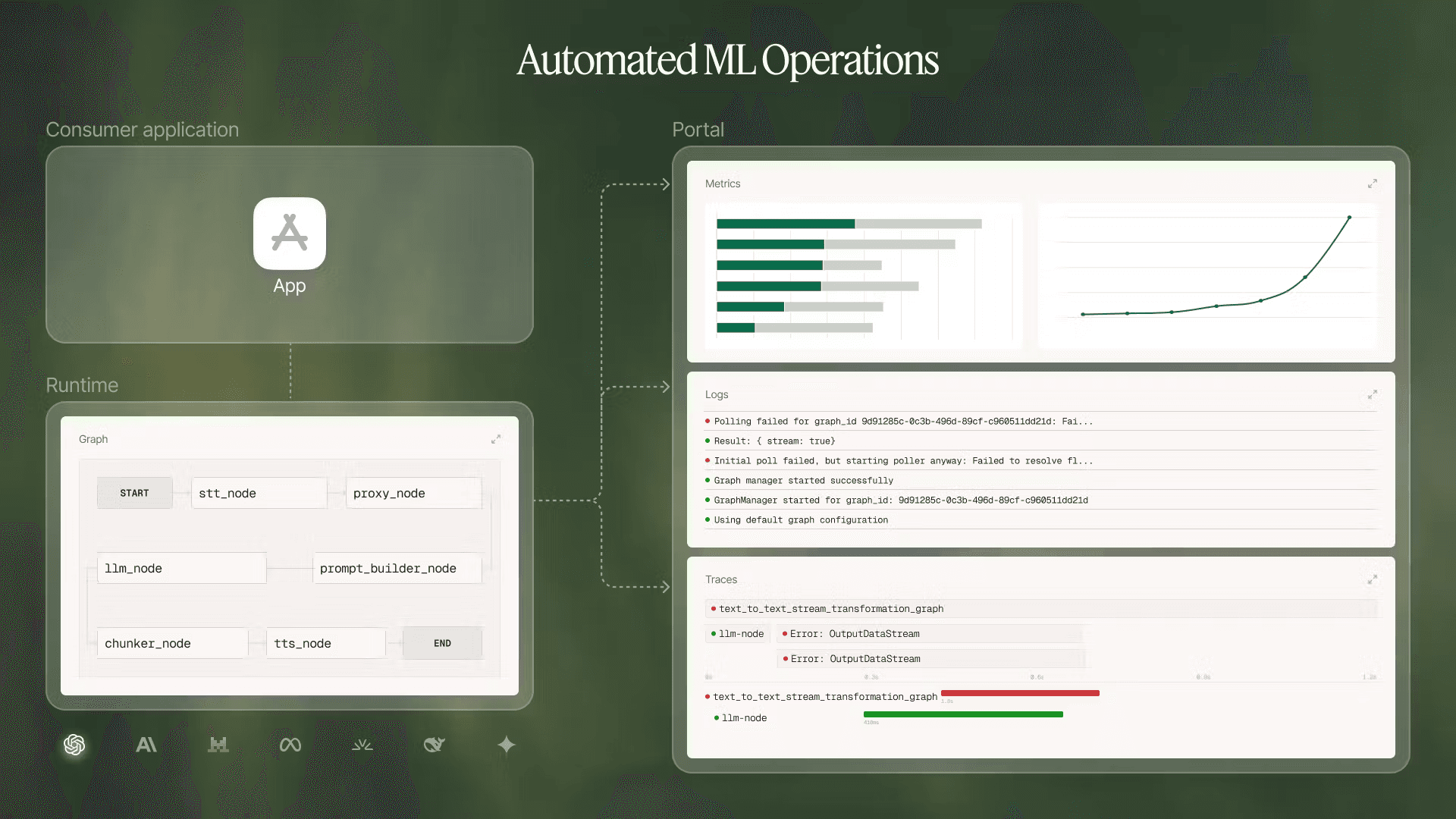

- Automated MLOps: Beyond basic operations, Runtime provides self-contained infrastructure automation with integrated telemetry capturing logs, traces, and metrics across every interaction. Actionable insights, such as identifying bugs, user patterns, and optimization opportunities, are surfaced through the Portal, our observability and experiment management platform. Runtime performs automatic failover between providers, manages capacity across models, and handles rate limiting intelligently. It also supports custom on-premise deployments with optimized model hosting for enterprises. As applications scale, we provide access to all necessary cloud infrastructure to train, tune, and host custom models that break the cost-quality frontier of default models.
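Automatic failover and rate limiting, as described above, can be sketched generically. The code below is an illustration under stated assumptions, not Runtime's implementation. It shows a token-bucket limiter per provider and a loop that tries providers in priority order, skipping rate-limited ones and falling back on errors. `Provider`, `TokenBucket`, and `generate_with_failover` are hypothetical names.

```python
import time
from dataclasses import dataclass
from typing import Callable, List


class TokenBucket:
    """Token-bucket limiter: refills `rate` tokens per second up to `capacity`."""

    def __init__(self, rate: float, capacity: int,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate
        self.capacity = capacity
        self.tokens = float(capacity)
        self.clock = clock
        self.last = clock()

    def try_acquire(self) -> bool:
        # Refill based on elapsed time, then spend one token if available.
        now = self.clock()
        self.tokens = min(float(self.capacity), self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


@dataclass
class Provider:
    name: str
    call: Callable[[str], str]  # hypothetical client function for one vendor
    limiter: TokenBucket


def generate_with_failover(providers: List[Provider], prompt: str) -> str:
    """Try providers in priority order; skip rate-limited ones, fall back on errors."""
    errors: List[str] = []
    for p in providers:
        if not p.limiter.try_acquire():
            errors.append(f"{p.name}: rate limited")
            continue
        try:
            return p.call(prompt)
        except Exception as exc:  # treat any error as an outage and try the next one
            errors.append(f"{p.name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))
```

A production system would also track error rates per provider and add backoff. This sketch only shows the control flow.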

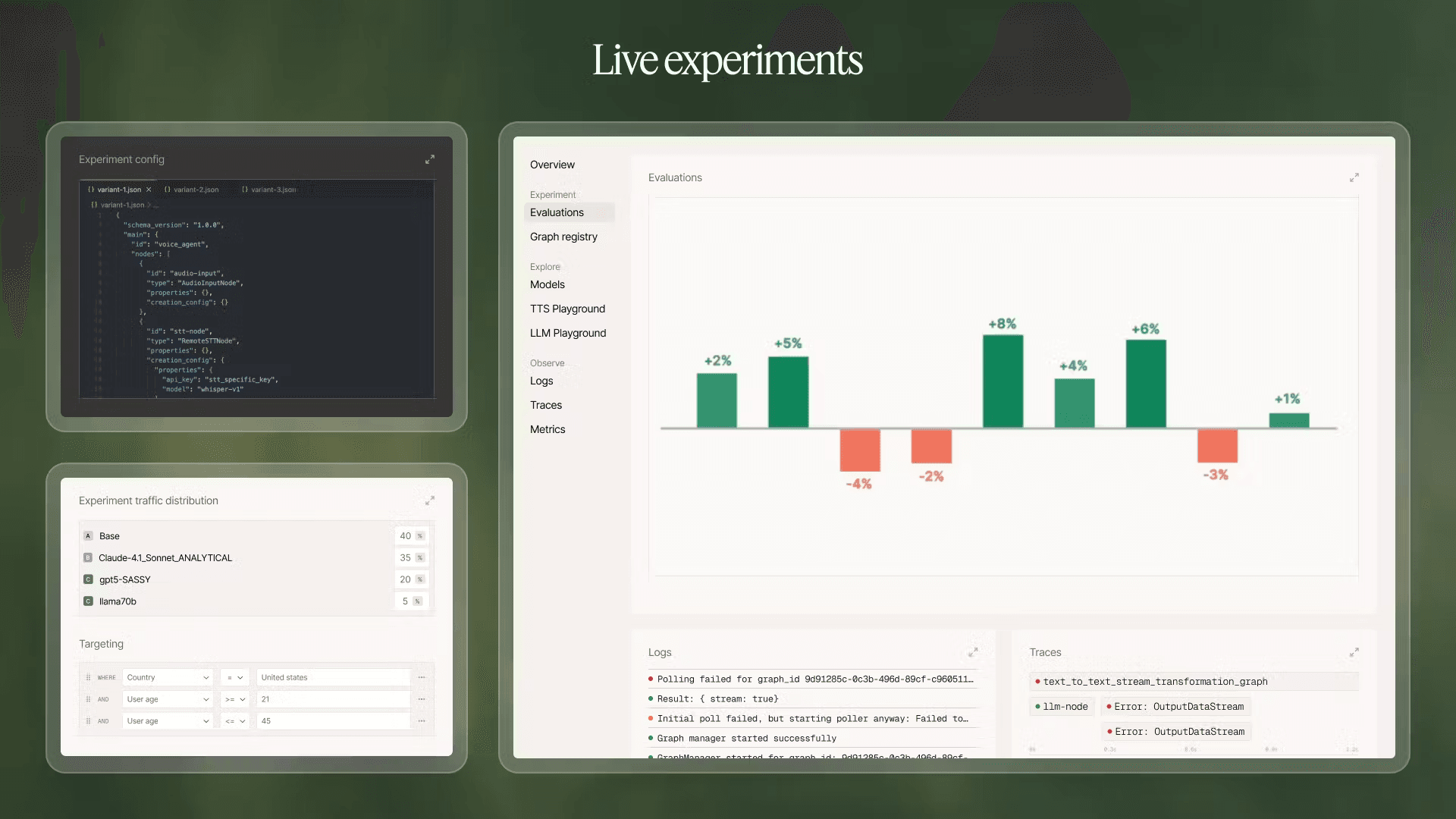

- Live Experiments: Deploy or scale experiments with one click. Because configuration is separated from code, A/B tests run instantly without deployment friction. Define variants via the SDK and manage tests through the Portal, and Runtime can automatically run hundreds of experiments simultaneously, testing different models, prompts, graph configurations, and logic flows. Changes deploy in seconds, with automatic impact measurement on user metrics.
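Separating configuration from code usually means experiments are defined as data and users are bucketed deterministically. The sketch below shows one common approach, weighted hash-based assignment. It is not Runtime's or the Portal's actual mechanism. The config schema, experiment name, and model and prompt labels are hypothetical.

```python
import hashlib
import json
from typing import Dict, List

# Hypothetical experiment config. In practice it would live outside the code
# (e.g., fetched from a config service) so variants can change without a deploy.
CONFIG_JSON = """
{
  "experiment": "voice_reply_test",
  "variants": [
    {"name": "control",   "weight": 50, "params": {"model": "model-a", "prompt": "v1"}},
    {"name": "treatment", "weight": 50, "params": {"model": "model-b", "prompt": "v2"}}
  ]
}
"""


def assign_variant(user_id: str, config: Dict) -> Dict:
    """Deterministically map a user to a variant, in proportion to the weights."""
    variants: List[Dict] = config["variants"]
    total = sum(v["weight"] for v in variants)
    # Salting with the experiment name keeps assignments independent across experiments.
    key = f'{config["experiment"]}:{user_id}'.encode("utf-8")
    bucket = int.from_bytes(hashlib.sha256(key).digest()[:8], "big") % total
    cumulative = 0
    for v in variants:
        cumulative += v["weight"]
        if bucket < cumulative:
            return v
    raise AssertionError("unreachable: bucket is always < total")


config = json.loads(CONFIG_JSON)
variant = assign_variant("user-123", config)
print(variant["name"], variant["params"])
```

The same user always lands in the same variant, so metrics stay comparable. Changing the weights in the config shifts traffic without touching the code.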

Proven results from early adopters of Inworld Runtime

- Our largest partners (major IP owners, media companies and AAA studios) are already leveraging Runtime as the foundation of their AI stacks

- Wishroll scaled from prototype to 1 million users in 19 days with over 95% cost reduction

- Little Umbrella ships new AI games while using Inworld to reduce update and maintenance effort on existing titles

- Streamlabs built a multimodal real-time streaming assistant with features that were unfeasible even six months ago

- Bible Chat upgraded and scaled their voice features while reducing voice costs by 85%

- Nanobit delivers personalized AI narratives to millions at sustainable unit economics