Published 03.26.2026

Best Realtime APIs for Voice AI

Voice AI is becoming the foundation for how users interact with AI agents. It's replacing typed inputs and batch-processed audio with continuous streaming conversations. For developers building realtime voice agents, wiring together separate speech-to-text and text-to-speech models is difficult.

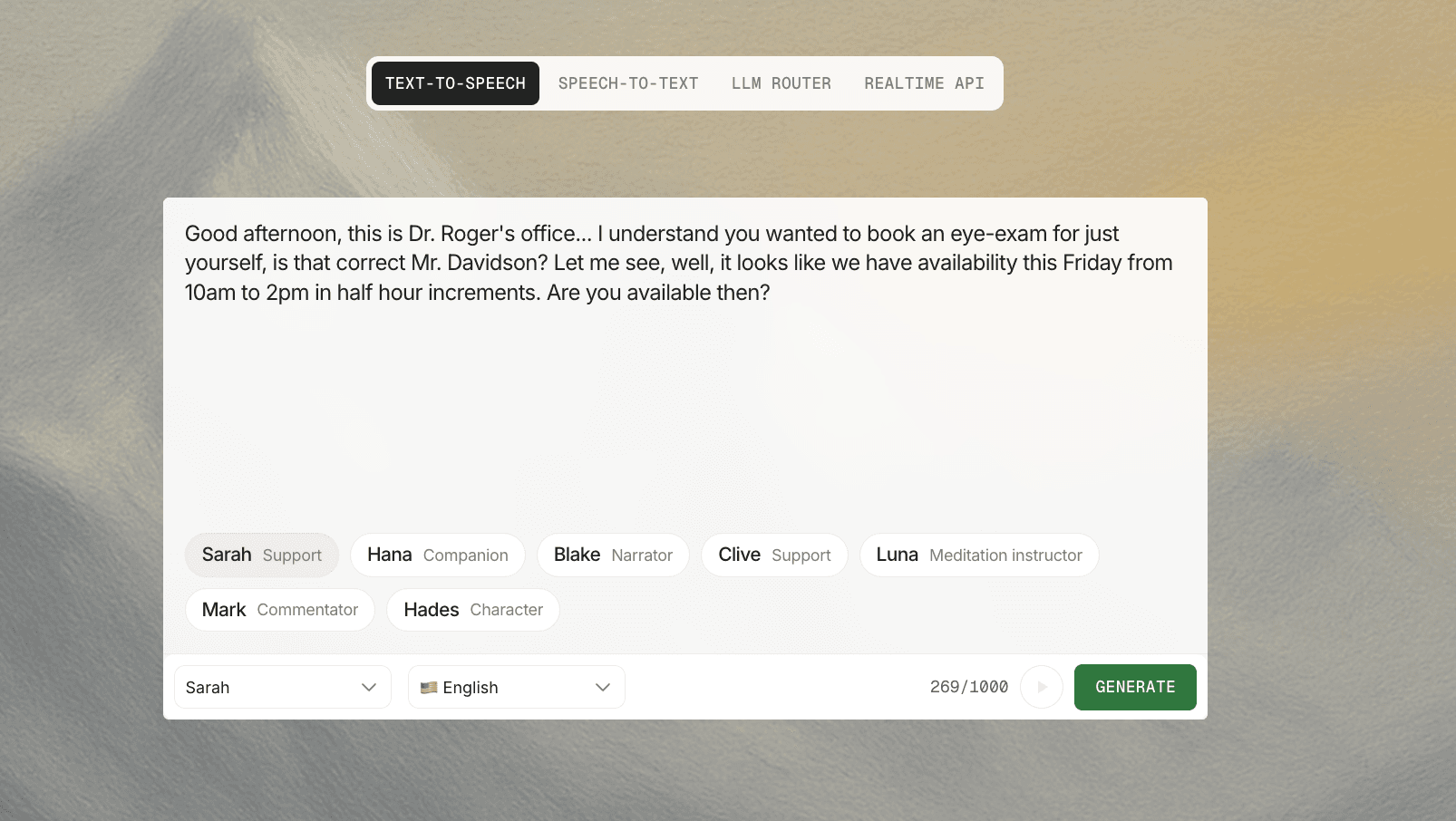

Realtime APIs make building voice AI substantially easier by accepting live speech input and returning speech output through a single interface. Currently, only two viable realtime APIs do this: the Inworld Realtime API and the OpenAI Realtime API. In this post, we'll compare the two.

What is a Realtime Voice API?

Realtime voice APIs accept speech as an input and return speech as an output. The API streams audio as the user speaks, detects when the user's turn is complete, generates a response through a language model, synthesizes speech, and streams audio back, all within a window tight enough that the exchange feels like a conversation.

The defining characteristic is streaming, not speed alone. A fast request-response system that waits for the user to finish speaking, transcribes the full utterance, generates a complete text response, synthesizes the entire audio clip, and then plays it back will feel sluggish even if each individual step is quick. Realtime systems overlap these stages so the user hears the first audio output while the model is still generating and the TTS engine is still synthesizing.

Realtime vs turn-based voice systems

Turn-based voice pipelines process audio in discrete batches. The user speaks, the system waits for silence, transcribes everything, calls a language model, synthesizes a full audio response, and plays it. Each step finishes before the next begins. The result is a noticeable gap between the user's last word and the agent's first, and no ability to interrupt the agent mid-response.

Streaming voice AI pipelines overlap these operations. STT emits partial transcripts while the user is still speaking. The language model can begin generating tokens as soon as the turn boundary is detected. TTS starts synthesizing from the first tokens of the response rather than waiting for the full text, which shortens the delay before the user hears anything.
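The difference between the two designs can be sketched as simple arithmetic on the critical path. The stage names and millisecond values below are illustrative assumptions, not measurements from any platform; the point is only that a streaming pipeline pays each stage's time to first output, while a turn-based pipeline pays each stage's full duration.

```python
from dataclasses import dataclass


@dataclass
class StageTimings:
    """Illustrative per-stage timings in milliseconds (assumed, not measured)."""
    endpointing_ms: float      # silence wait before the turn is considered over
    stt_full_ms: float         # batch transcription of the whole utterance
    stt_finalize_ms: float     # finalizing a transcript that was already streaming
    llm_first_token_ms: float  # time until the model emits its first token
    llm_full_ms: float         # time until the model finishes the whole reply
    tts_first_audio_ms: float  # time until TTS emits its first audio chunk
    tts_full_ms: float         # time to synthesize the entire reply


def turn_based_first_audio(t: StageTimings) -> float:
    # Each stage must finish completely before the next one starts.
    return t.endpointing_ms + t.stt_full_ms + t.llm_full_ms + t.tts_full_ms


def streaming_first_audio(t: StageTimings) -> float:
    # Stages overlap, so only each stage's first output sits on the critical path.
    return (t.endpointing_ms + t.stt_finalize_ms
            + t.llm_first_token_ms + t.tts_first_audio_ms)


timings = StageTimings(
    endpointing_ms=300, stt_full_ms=400, stt_finalize_ms=50,
    llm_first_token_ms=250, llm_full_ms=1500,
    tts_first_audio_ms=150, tts_full_ms=1200,
)
print(turn_based_first_audio(timings))  # 3400
print(streaming_first_audio(timings))   # 750
```

With these assumed numbers the individual stages are identical in both cases; only the overlap changes, and it accounts for the whole gap.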

What "Realtime" Means in Practice

The word "realtime" is overused and under-defined. For voice AI evaluation, these are the metrics that anchor the term in measurable outcomes.

Time to first audio is the delay from the end of user speech (or from a response trigger) to the first audible output frame reaching the user. This single metric captures the perceived speed of the system better than any aggregate latency number.

End-to-end latency is the total delay from user speech to agent speech, including capture, processing, synthesis, and playback.

Turn detection latency measures how quickly the system decides the user has finished speaking. Slow turn detection adds dead air before the agent responds, and aggressive turn detection cuts users off mid-sentence.

Barge-in (or interruption handling) determines whether the system can stop speaking and react when the user starts talking over it.

Jitter tolerance measures how well the system handles variable network conditions without audible glitches or broken turn-taking. A realtime voice API that performs well on a stable local network but degrades over cellular connections has a jitter tolerance problem.
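These metrics can be computed from timestamps a client records itself. The sketch below assumes three hypothetical timestamps per exchange and a list of audio-chunk arrival times, all in milliseconds; the field names are invented for illustration, and the jitter figure is a simple mean absolute deviation of inter-arrival gaps rather than any standardized definition.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Sequence


@dataclass
class TurnTimestamps:
    """Client-side timestamps for one exchange, in milliseconds (hypothetical names)."""
    user_speech_end_ms: float     # last frame that contained user speech
    turn_end_detected_ms: float   # when the system decided the turn was over
    first_audio_played_ms: float  # first agent audio frame reaching the speaker


def turn_detection_latency_ms(t: TurnTimestamps) -> float:
    return t.turn_end_detected_ms - t.user_speech_end_ms


def time_to_first_audio_ms(t: TurnTimestamps) -> float:
    return t.first_audio_played_ms - t.user_speech_end_ms


def interarrival_jitter_ms(arrival_times_ms: Sequence[float]) -> float:
    """Mean absolute deviation of the gaps between consecutive audio chunks."""
    gaps = [b - a for a, b in zip(arrival_times_ms, arrival_times_ms[1:])]
    if not gaps:
        return 0.0
    average_gap = mean(gaps)
    return mean([abs(gap - average_gap) for gap in gaps])


turn = TurnTimestamps(10_000, 10_250, 10_750)
print(turn_detection_latency_ms(turn))  # 250
print(time_to_first_audio_ms(turn))     # 750
```

Recording the same three timestamps on a stable network and on a cellular connection gives a direct comparison of how each metric degrades.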

How to evaluate a realtime voice API

Architecture knowledge is useful, but teams also need a structured way to compare platforms. The evaluation criteria below translate the pipeline concepts above into a buyer checklist.

Speech recognition and turn detection

Check whether the STT engine emits partial transcripts as audio streams in, or only returns results after detecting silence. Partial transcripts are what enable the pipeline overlap that makes streaming voice AI fast.

Evaluate the VAD options. Can you configure endpointing sensitivity? Does the platform support semantic VAD that considers the meaning of what the user said, or only energy-based silence detection? Test in noisy environments, because VAD that works well in a quiet room may cut users off in a car or open office.
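For intuition about what energy-based silence detection does, here is a minimal sketch. It is not any vendor's implementation: the threshold, the frame counts, and the function names are assumptions chosen for illustration. It declares the turn over once a run of low-energy frames follows detected speech, which also shows why background noise above the threshold can keep the turn open and a low frame count can cut a speaker off during a pause.

```python
import math
from typing import Optional, Sequence


def rms(frame: Sequence[float]) -> float:
    """Root-mean-square energy of one frame of samples in [-1.0, 1.0]."""
    if not frame:
        return 0.0
    return math.sqrt(sum(s * s for s in frame) / len(frame))


def find_end_of_turn(
    frames: Sequence[Sequence[float]],
    energy_threshold: float = 0.02,     # assumed value; tune per microphone and environment
    silence_frames_required: int = 25,  # e.g. 25 frames of 20 ms = 500 ms of silence
) -> Optional[int]:
    """Return the index of the frame where the turn is declared over, or None."""
    heard_speech = False
    silent_run = 0
    for index, frame in enumerate(frames):
        if rms(frame) >= energy_threshold:
            heard_speech = True
            silent_run = 0  # any loud frame resets the silence counter
        elif heard_speech:
            silent_run += 1
            if silent_run >= silence_frames_required:
                return index
    return None


speech = [0.5] * 160
silence = [0.0] * 160
frames = [silence] * 3 + [speech] * 5 + [silence] * 10
print(find_end_of_turn(frames, silence_frames_required=4))  # 11
```

Lowering `silence_frames_required` makes the agent respond sooner but raises the risk of premature cutoffs, which is the tradeoff semantic VAD tries to soften by also considering what was said.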

Language model responsiveness and tool use

First-token latency from the language model is the biggest variable in many pipelines. Ask whether the platform supports streaming model output so TTS can begin before the full response is generated. If your application requires function calling or tool use, test whether the model can invoke tools without blocking the audio stream.

Interruption recovery matters here too. When a user barges in mid-response, can the model cleanly abandon its current generation and start over with the new context, or does the system finish generating the old response before processing the interruption?
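Clean abandonment of an in-flight response can be illustrated with task cancellation. The sketch below simulates a streamed response with `asyncio` and cancels it when a barge-in occurs; the timer stands in for a VAD signal, and `speak` and `run_turn_with_barge_in` are hypothetical names, not part of any platform's SDK.

```python
import asyncio
from typing import List


async def speak(chunks: List[str], played: List[str], chunk_delay: float = 0.05) -> None:
    """Simulate streaming a response to the user one chunk at a time."""
    for chunk in chunks:
        await asyncio.sleep(chunk_delay)
        played.append(chunk)


async def run_turn_with_barge_in(chunks: List[str], barge_in_after: float) -> List[str]:
    """Start speaking, then cancel the response if the user interrupts."""
    played: List[str] = []
    response_task = asyncio.create_task(speak(chunks, played))

    # Stand-in for a VAD event reporting that the user started talking.
    await asyncio.sleep(barge_in_after)

    if not response_task.done():
        response_task.cancel()  # abandon the old generation immediately
    try:
        await response_task
    except asyncio.CancelledError:
        pass  # expected when the response was interrupted
    return played


if __name__ == "__main__":
    heard = asyncio.run(run_turn_with_barge_in([f"chunk-{i}" for i in range(10)], 0.12))
    print(f"user heard {len(heard)} of 10 chunks before interrupting")
```

A system that finishes the old response before handling the interruption behaves as if the `cancel()` call were missing: the user keeps hearing stale audio while the new input waits.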

Text-to-speech quality and speed

Time to first audio at the TTS stage deserves by far the most scrutiny. Ask for P90 benchmarks instead of averages. Streaming TTS output is non-negotiable for low-latency voice AI, because waiting for full synthesis before playback adds hundreds of milliseconds.
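To see why P90 is more informative than an average, consider a small set of time-to-first-audio samples. The values below are invented for illustration, and the percentile uses the nearest-rank method, which is one of several common definitions.

```python
import math
from statistics import mean
from typing import Sequence


def percentile(samples: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of values at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


# Hypothetical time-to-first-audio samples in milliseconds.
samples_ms = [120, 125, 130, 128, 122, 135, 127, 131, 640, 710]

print(round(mean(samples_ms), 1))   # 236.8
print(percentile(samples_ms, 50))   # 128
print(percentile(samples_ms, 90))   # 640
```

In this made-up data set the median looks excellent and the mean looks tolerable, yet one request in ten takes well over half a second. The P90 figure exposes that tail, which is what users actually notice.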

Voice quality and controllability also matter for production deployments. Can you adjust speaking rate, pitch, or emotional tone? Does the voice sound natural across long utterances, or does quality degrade on responses longer than a few sentences?

Orchestration, observability, and deployment

Routing, A/B testing, and multi-agent support determine how flexibly you can iterate once your voice agent is live. Inworld's Realtime API (currently in research preview) includes router support for handling different user cohorts and testing configurations, alongside automatic interruption handling and turn-taking as documented features.

Observability is often the gap between a demo and a production system. Can you access per-stage latency telemetry? Can you replay sessions for debugging? Compliance and data residency requirements may also constrain your deployment options, so check whether the platform supports your target regions and data handling policies.
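If a platform does not expose per-stage telemetry, a team can approximate it on its own side of the connection. The helper below is a generic sketch: `StageTimer` and the stage names are hypothetical, and the `time.sleep` calls stand in for real STT and LLM work.

```python
import time
from contextlib import contextmanager
from typing import Dict, Iterator


class StageTimer:
    """Accumulates wall-clock time per named pipeline stage, in milliseconds."""

    def __init__(self) -> None:
        self.durations_ms: Dict[str, float] = {}

    @contextmanager
    def stage(self, name: str) -> Iterator[None]:
        start = time.perf_counter()
        try:
            yield
        finally:
            # Record the time even if the stage raised an exception.
            elapsed_ms = (time.perf_counter() - start) * 1000
            self.durations_ms[name] = self.durations_ms.get(name, 0.0) + elapsed_ms

    def slowest(self) -> str:
        """Name of the stage that consumed the most time so far."""
        return max(self.durations_ms, key=lambda name: self.durations_ms[name])


timer = StageTimer()
with timer.stage("stt"):
    time.sleep(0.01)  # placeholder for speech recognition
with timer.stage("llm"):
    time.sleep(0.05)  # placeholder for model inference
print(timer.slowest())  # llm
```

Client-side timing like this includes network time, so it complements rather than replaces server-side telemetry from the provider.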

Protocol compatibility deserves specific attention. If your team has already prototyped on the OpenAI Realtime event schema, platforms that follow the same protocol reduce migration effort. Inworld's migration documentation positions the API as an OpenAI Realtime-compatible system with extensions for additional customization, which lowers the switching cost for teams already using that event model.

Best APIs and platforms for realtime voice AI

1. Inworld

Inworld's Realtime API is a full-stack speech-to-speech platform that covers every stage of the realtime voice AI pipeline in one API endpoint. The API accepts audio from the user and runs speech recognition, voice activity detection, and turn boundary detection. An LLM with customizable system prompts and tools then interprets the input, and the response streams back to the client as synthesized speech. Inworld manages every step within the same API, so no external orchestration middleware or custom glue code is needed between stages.

The Inworld Realtime API follows the OpenAI Realtime protocol, which means teams already familiar with OpenAI's event schema (events like session.update, input_audio_buffer.append, response.create, and response.done) can integrate with Inworld using the same client code patterns. Inworld extends the base protocol with additional capabilities, including router support for directing different user cohorts to different agent configurations, semantic VAD with configurable eagerness for smarter turn detection, and dynamic session control that lets developers adjust voice settings, model parameters, or VAD sensitivity mid-conversation without disconnecting. These extensions do not break compatibility with existing OpenAI-shaped clients, so migration from OpenAI's Realtime API to Inworld requires minimal changes.
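As a rough illustration of what "the same client code patterns" means, the helpers below build and inspect JSON events using the event names listed above. The payload shapes (base64-encoded audio in an `audio` field, a bare `response.create`) follow OpenAI's published Realtime event schema as an assumption; confirm field names against the reference for whichever provider you target. No connection is opened here, and the function names are hypothetical.

```python
import base64
import json


def audio_append_event(pcm_bytes: bytes) -> str:
    """Serialize one chunk of raw audio as an input_audio_buffer.append event."""
    return json.dumps({
        "type": "input_audio_buffer.append",
        # Assumed shape: audio travels as base64 text inside the JSON message.
        "audio": base64.b64encode(pcm_bytes).decode("ascii"),
    })


def response_create_event() -> str:
    """Ask the server to generate a response from the buffered input."""
    return json.dumps({"type": "response.create"})


def is_response_done(raw_message: str) -> bool:
    """Check whether a server message marks the end of a response."""
    return json.loads(raw_message).get("type") == "response.done"


print(response_create_event())  # {"type": "response.create"}
print(is_response_done('{"type": "response.done"}'))  # True
```

Because the events are plain JSON keyed by `type`, code like this does not care which compatible provider sits on the other end of the connection, which is where the low migration cost comes from.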

Both WebSocket and WebRTC are supported as first-class transports, documented with separate quickstart guides and sharing the same event model. WebRTC is the path for browser-native voice experiences where low-latency media handling, adaptive bitrate, and built-in jitter buffering matter. WebSocket is the path for server-side orchestration, telephony bridges, and use cases that need explicit event-level control over every message in the session. Because the event schema is consistent across both transports, teams can run hybrid architectures (WebRTC on the client, WebSocket on the backend) without maintaining separate codepaths or rewriting application logic when switching transports.

Interruption handling and turn-taking are automatic, first-class features rather than behaviors teams need to build on top of the API. Setting interrupt_response: true enables barge-in so the agent stops speaking and begins processing new input when the user talks over it. Turn detection supports both semantic VAD (which considers the content of the partial transcript to make smarter endpointing decisions) and energy-based VAD, with the eagerness parameter giving developers direct control over the tradeoff between fast response times and premature cutoffs. Router support allows A/B testing of voice models, VAD settings, prompt variations, or entirely different agent configurations at the API level, without deploying separate infrastructure per variant.
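A session configuration carrying these settings might look like the sketch below. The `interrupt_response` and eagerness settings come from the text above; the nesting under `session.turn_detection`, the `semantic_vad` and `server_vad` type names, and the eagerness values are assumptions borrowed from OpenAI's Realtime schema and may differ by provider or API version. `build_session_update` is a hypothetical helper.

```python
import json


def build_session_update(
    vad_type: str = "semantic_vad",  # assumed name; "server_vad" assumed for energy-based VAD
    eagerness: str = "medium",       # assumed values: "low", "medium", "high"
    interrupt_response: bool = True,
) -> str:
    """Build a session.update event that configures turn detection and barge-in."""
    turn_detection = {
        "type": vad_type,
        "interrupt_response": interrupt_response,  # True enables barge-in
    }
    if vad_type == "semantic_vad":
        # Eagerness only applies to semantic turn detection in this sketch.
        turn_detection["eagerness"] = eagerness
    return json.dumps({
        "type": "session.update",
        "session": {"turn_detection": turn_detection},
    })


print(build_session_update(eagerness="low"))
```

Sending a new `session.update` mid-conversation is how a client would shift the tradeoff, for example lowering eagerness after a user has been cut off.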

For technical evaluators comparing realtime voice APIs, this combination of pipeline coverage, protocol compatibility, dual transport support, built-in conversational mechanics, and experimentation infrastructure is what separates Inworld from both component-level APIs (which cover one stage) and simpler speech-to-speech surfaces (which may lack orchestration, routing, or transport flexibility). The API is currently in research preview, with quickstart guides, API reference, and examples available in both JavaScript and Python.

Pros:

- Full pipeline coverage (STT, LLM, TTS, VAD, turn-taking, interruption handling) in a single API with no external middleware required.

- Extensive model support: with the Inworld Router, users can choose among supported STT, LLM, and TTS models.

- #1-ranked TTS on the Artificial Analysis Speech Arena. Realtime TTS 1.5 Max leads the leaderboard at ~1,208 ELO, with two of the top five models on the arena belonging to Inworld.

- WebSocket and WebRTC transports with a shared event model; switch transports without rewriting application logic.

- OpenAI Realtime protocol compatibility with a documented migration path, so existing OpenAI client code transfers with minimal changes.

- Semantic VAD with configurable eagerness for smarter turn detection; energy-based VAD also available.

- Automatic barge-in via interrupt_response: true, with clean interruption recovery.

- Router support for A/B testing voice models, VAD settings, and agent configurations at the API level.

Cons:

- As a full-stack platform, Inworld controls the entire pipeline. Teams that need to swap in a specific third-party STT engine or a particular LLM not offered by Inworld have less component-level flexibility than they would with an orchestration framework like Pipecat.

Best for: Teams that want a single realtime voice API covering STT, LLM, TTS, and orchestration with minimal integration overhead. Especially strong for teams already familiar with the OpenAI Realtime event schema who want additional transport flexibility, built-in routing and A/B testing, and production features like automatic interruption handling and semantic VAD without assembling those capabilities from separate providers. The combination of dual transport support, OpenAI protocol compatibility, session-level control, and experimentation infrastructure makes Inworld the most complete full-stack option for teams evaluating realtime voice AI platforms today, backed by the #1-ranked TTS on the Artificial Analysis Speech Arena leaderboard.

Transport / architecture fit: WebRTC for browser-native voice experiences with low-latency media handling. WebSocket for server-side orchestration, telephony bridges, and explicit event-level control. Both transports work within the same architecture and share the same event model, so teams can deploy hybrid setups (WebRTC on the client, WebSocket on the backend) without maintaining separate codepaths.

Key tradeoff: The most feature-rich full-stack realtime voice AI platform in this comparison, with dual transport support, OpenAI compatibility, built-in orchestration, and TTS quality ranked #1 on the Artificial Analysis Speech Arena (~1,208 ELO), but less established than OpenAI.

2. OpenAI Realtime API

OpenAI's Realtime API supports both WebRTC and WebSocket transports and is documented for low-latency speech-to-speech and multimodal interactions.

Pros:

- OpenAI's event schema has become a de facto compatibility target for other realtime voice AI platforms, so familiarity with this protocol transfers across multiple providers.

- Tight coupling between the language model and the realtime transport layer. Audio input can flow directly into the model without an intermediate STT step when using OpenAI's native multimodal mode, which can reduce pipeline complexity and latency. The API is now generally available (GA), with MCP and SIP support.

- The WebRTC guide is explicitly framed for browser-based applications, and the WebSocket guide targets server-to-server integrations.

Cons:

- Tight integration with OpenAI's own models means less flexibility to swap in third-party STT, TTS, or LLM components. The API is also limited to 9 built-in voices.

- OpenAI speech models have fallen behind in quality compared to other vendors. On the Artificial Analysis Speech Arena, OpenAI's TTS models rank below Inworld's model, which ranks at ~1,208 ELO.

- Teams that want to use a different language model or a specific TTS voice outside OpenAI's offerings will not be able to use the realtime API.

Best for: Teams building directly on OpenAI's language models who want the simplest path to voice interaction without managing separate STT or TTS providers, and who value protocol familiarity that carries across the broader ecosystem.

Transport / architecture fit: WebRTC for browser-based voice applications. WebSocket for server-to-server integrations. Both are first-class connection methods in the documentation.

Key tradeoff: The tightest model-to-transport integration available for OpenAI models, but that coupling and the limit of 9 voices restrict flexibility for teams that need third-party components, diverse voice options, or the ability to swap LLM providers later.

Why Inworld is the Best Realtime API for Voice AI

For teams that want to apply this framework now, Inworld's Realtime API addresses the core evaluation criteria in a single surface: dual WebRTC and WebSocket transport support, OpenAI Realtime protocol compatibility for low migration friction, semantic VAD with configurable eagerness, automatic interruption handling, and published P90 TTS latency benchmarks (under 250ms for Realtime TTS 1.5 Max, under 130ms for Realtime TTS 1.5 Mini). These latency numbers are paired with the #1 quality ranking on the Artificial Analysis Speech Arena, where Realtime TTS 1.5 Max leads at ~1,208 ELO, validated through blind preference testing by thousands of listeners. That combination of transport flexibility, protocol compatibility, and measurable latency control makes Inworld a strong starting point for teams evaluating full-stack realtime voice AI platforms, particularly those already working with the OpenAI event schema who need production-grade orchestration features without assembling them from separate providers.

Voice AI latency is a solvable problem when you treat it as a sum of measurable parts rather than a single vendor promise.

FAQ

What is realtime voice AI?

Realtime voice AI is continuous, streaming speech-to-speech interaction between a user and an AI agent. The system ingests audio as the user speaks, detects turn boundaries, generates a response through a language model, and streams synthesized speech back, all within a latency window tight enough to feel conversational. The defining characteristic is that pipeline stages (STT, LLM inference, TTS) overlap rather than running sequentially, so the user hears the first audio output while the model is still generating and the TTS engine is still synthesizing.

What is a realtime voice API?

A realtime voice API is a developer interface that handles some or all stages of the streaming speech-to-speech pipeline: audio ingestion, speech recognition, turn detection, language model inference, text-to-speech, and audio delivery. Full-stack realtime voice APIs like Inworld's Realtime API bundle these stages into a single integrated surface, while component APIs (such as Google Cloud's streaming STT or TTS) cover individual stages and require external orchestration to connect them.

What latency is considered good for voice AI?

A well-optimized realtime voice AI pipeline typically achieves 500-800ms end-to-end latency for natural-feeling interactions, though acceptable thresholds vary by use case. Customer support agents can tolerate slightly longer delays, while companion or tutoring applications benefit from staying at the low end. Time to first audio is the most important single metric. For reference, Inworld's Realtime TTS 1.5 Mini delivers under 130ms P90 time to first audio, and Realtime TTS 1.5 Max delivers under 250ms P90. Ask vendors for P90 benchmarks rather than averages, and decompose latency by pipeline stage rather than relying on a single aggregate number. Inworld also holds the #1 quality ranking on the Artificial Analysis Speech Arena, demonstrating that low latency does not come at the expense of voice quality.

When should I use WebRTC vs WebSocket for realtime voice AI?

Use WebRTC when your voice agent runs in a web browser or mobile WebView and users connect over variable network conditions. WebRTC handles audio encoding, adaptive bitrate, jitter buffering, and NAT traversal natively. Use WebSocket when your agent connects through a telephony bridge, runs behind a proxy, or operates in a controlled server environment where you need explicit event-level control over session messages. Many production systems use both: WebRTC on the client for media transport and WebSocket on the server for orchestration and control. Inworld's Realtime API supports both transports with a shared event model, so teams can run hybrid architectures without maintaining separate codepaths.

How is realtime voice AI different from turn-based voice bots?

Turn-based voice bots process audio in discrete, sequential steps: wait for silence, transcribe the full utterance, call a language model, synthesize the complete response, and play it back. Each step finishes before the next begins, creating a noticeable gap between the user's last word and the agent's first. Realtime voice AI overlaps these stages so STT emits partial transcripts while the user is still speaking, the LLM begins generating tokens as soon as the turn boundary is detected, and TTS starts synthesizing from the first tokens rather than waiting for the full text. Realtime systems also support barge-in, allowing users to interrupt the agent mid-response.

Can teams migrate from OpenAI's Realtime API to another provider?

Yes, if the target provider follows the OpenAI Realtime event schema. Inworld's Realtime API is designed as an OpenAI Realtime-compatible system, with a documented migration path that allows existing OpenAI client code to transfer with minimal changes. Inworld extends the base protocol with features like semantic VAD, router support, and dynamic session configuration, but these extensions do not break compatibility with OpenAI-shaped clients. When evaluating any migration target, check whether events like session.update, input_audio_buffer.append, response.create, and response.done are supported with the same semantics.

What should teams look for when evaluating a realtime voice API?

Focus on five areas. First, speech recognition: does the STT engine emit partial transcripts, and what VAD options are available (energy-based, semantic, configurable eagerness)? Second, LLM responsiveness: what is first-token latency, and does the platform support streaming model output to TTS? Third, TTS speed and quality: what is P90 time to first audio, and does the engine stream output? Fourth, interruption handling: can the agent stop speaking and process new input when the user barges in? Fifth, orchestration and observability: does the platform support routing, A/B testing, per-stage latency telemetry, and session replay? Transport flexibility (WebRTC, WebSocket, or both) and protocol compatibility with established event schemas are also practical considerations that affect integration speed.

What makes a voice AI platform ready for production use?

Production readiness goes beyond low latency on a demo. Look for automatic interruption handling and turn-taking that work without custom middleware, configurable VAD that performs well in noisy environments, per-stage observability so you can identify and fix the slowest pipeline component, and routing or A/B testing support so you can iterate on voice models and agent configurations without redeploying infrastructure. Transport flexibility matters because production deployments often span browsers, mobile apps, and telephony channels. Protocol stability and clear API documentation (including session lifecycle, error handling, and authentication patterns) reduce the risk of integration surprises as you scale.